Creating an AI Policy for Schools [2026]

Every school needs an AI policy. This practical guide covers governance structures, assessment integrity, data protection and staff training.

![Creating an AI Policy for Schools [2026]](https://cdn.prod.website-files.com/5b69a01ba2e409501de055d1/694e6106ab5232ef431fd9f4_creating-ai-policy-schools-2025-classroom-teaching.webp)

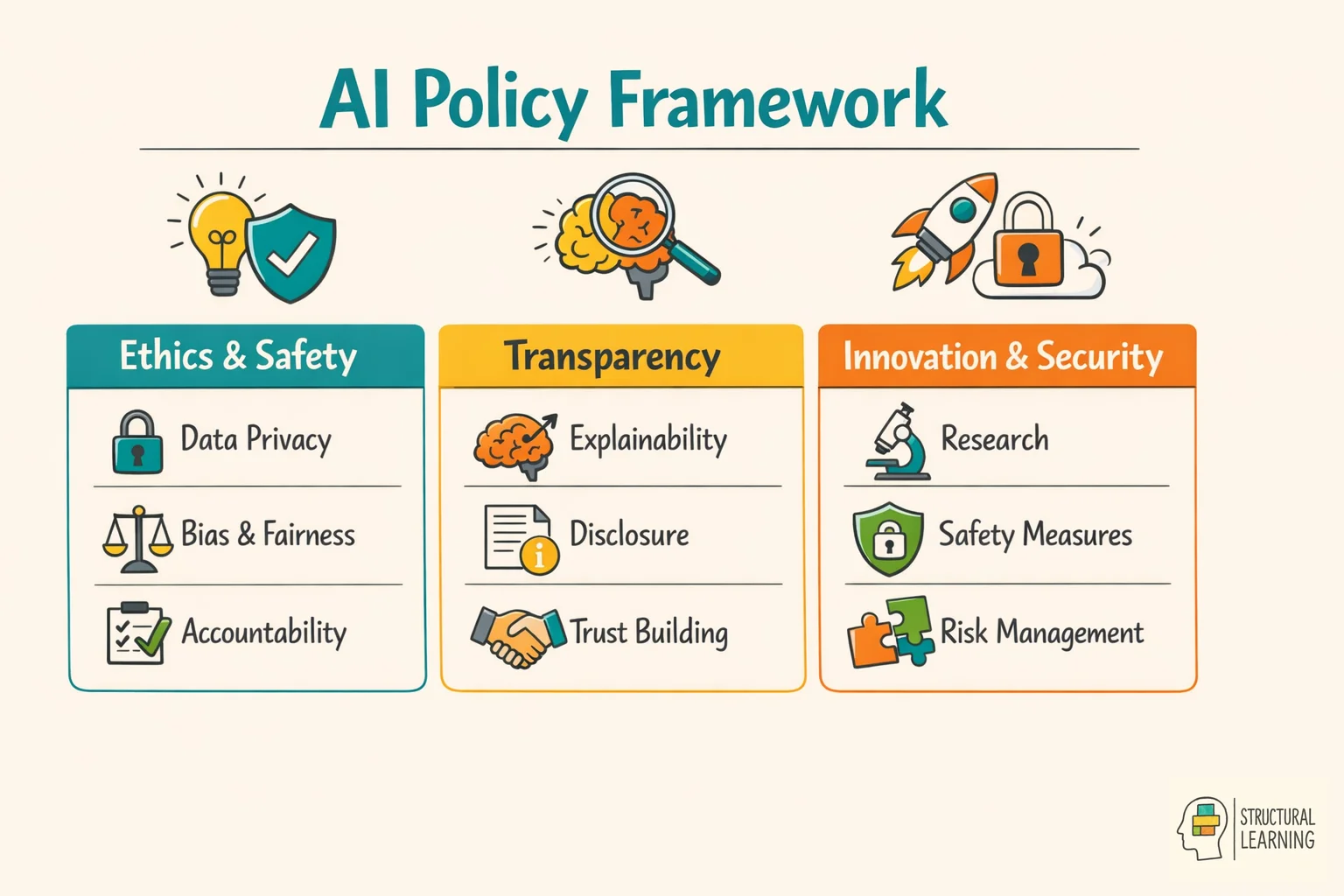

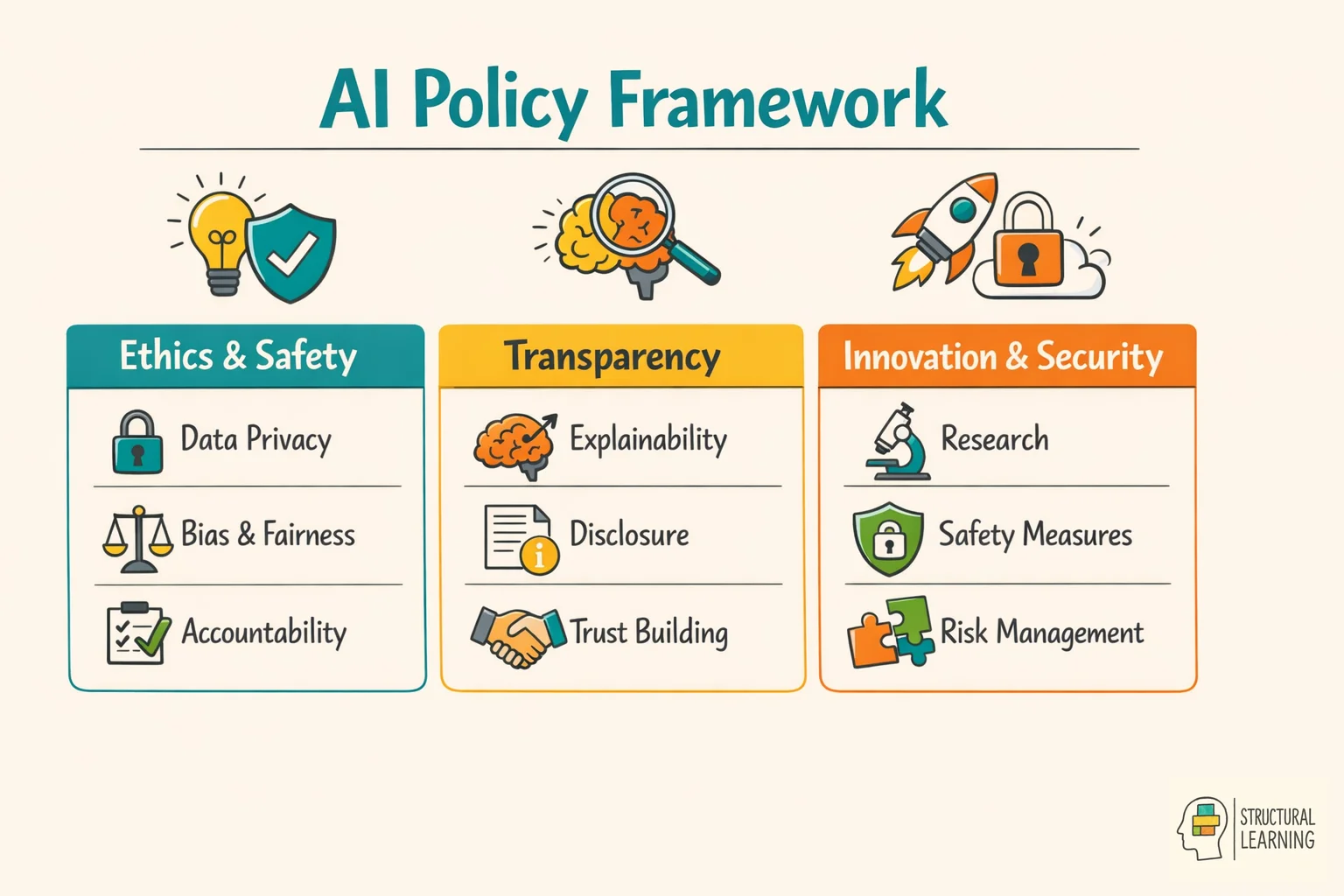

An AI policy is easier to write when you follow a clear structure. Learners already use AI tools such as ChatGPT, so schools need clear rules that balance opportunity with integrity (Holmes & Watson, 2023). For more on this topic, see AI CPD for schools. This guide covers each step: planning, consulting, implementing and reviewing. Adapt the provided templates for your school, as Smith (2024) advises.

The DfE's (2024) AI guidance advises schools to manage risks such as data protection. UNESCO (2023) reports that only 15% of countries have AI policies for education. The ICO requires schools to assess data protection risks before using AI tools with learner data. For more on this topic, see AI tools. Ofsted (2024) now reviews digital strategy when judging leadership.

The templates offer a starting point, not a final version. Treat them as blueprints for your school's AI policy. The finished policy must reflect your setting and your community's needs. Whatever the school type, from primary to sixth form, the core ideas from Holmes et al. (2021) stay the same.

Learners use AI, so schools require a policy. Staff explore AI, and parents seek reassurance. DfE (2024) allows schools to create their own AI frameworks. Without policies, schools risk unplanned AI adoption.

The DfE's 2024 AI advice lacks firm rules. School leaders have freedom and responsibility. Learners are using AI, often unaided. Staff may try AI but are unsure of policy. Parents need reassurance on standards.

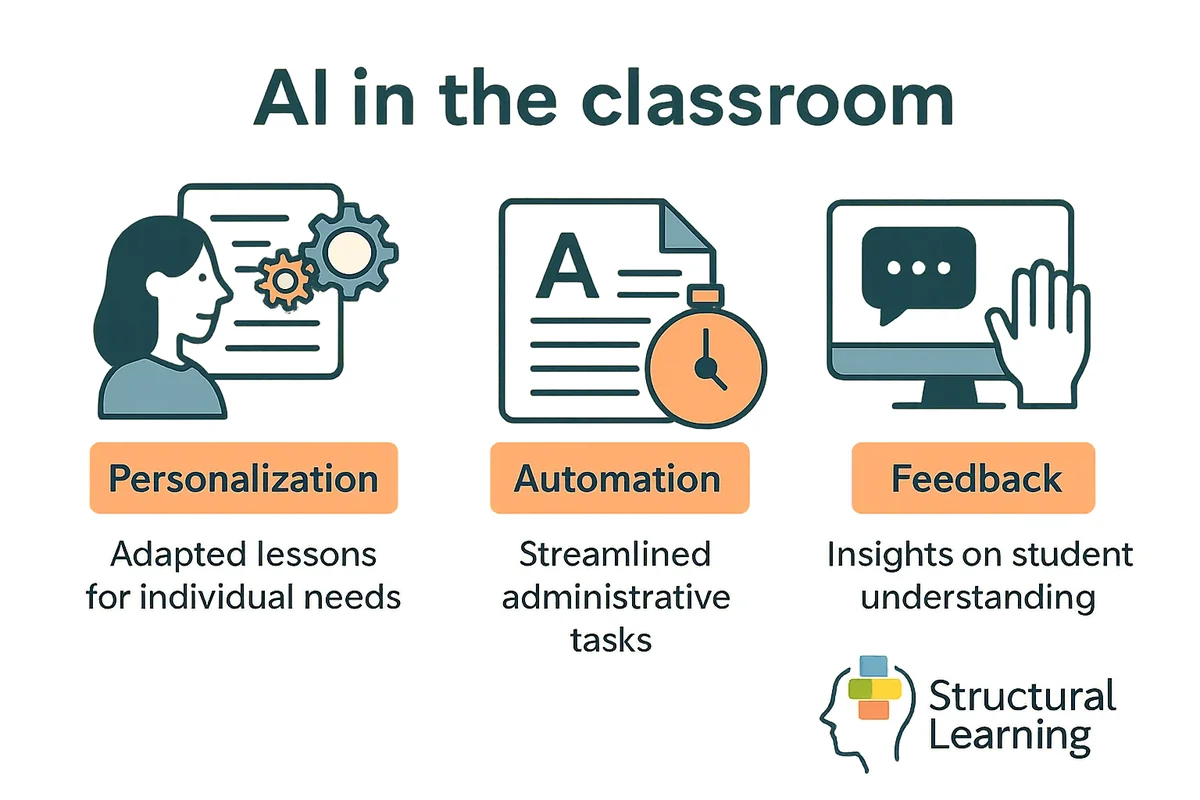

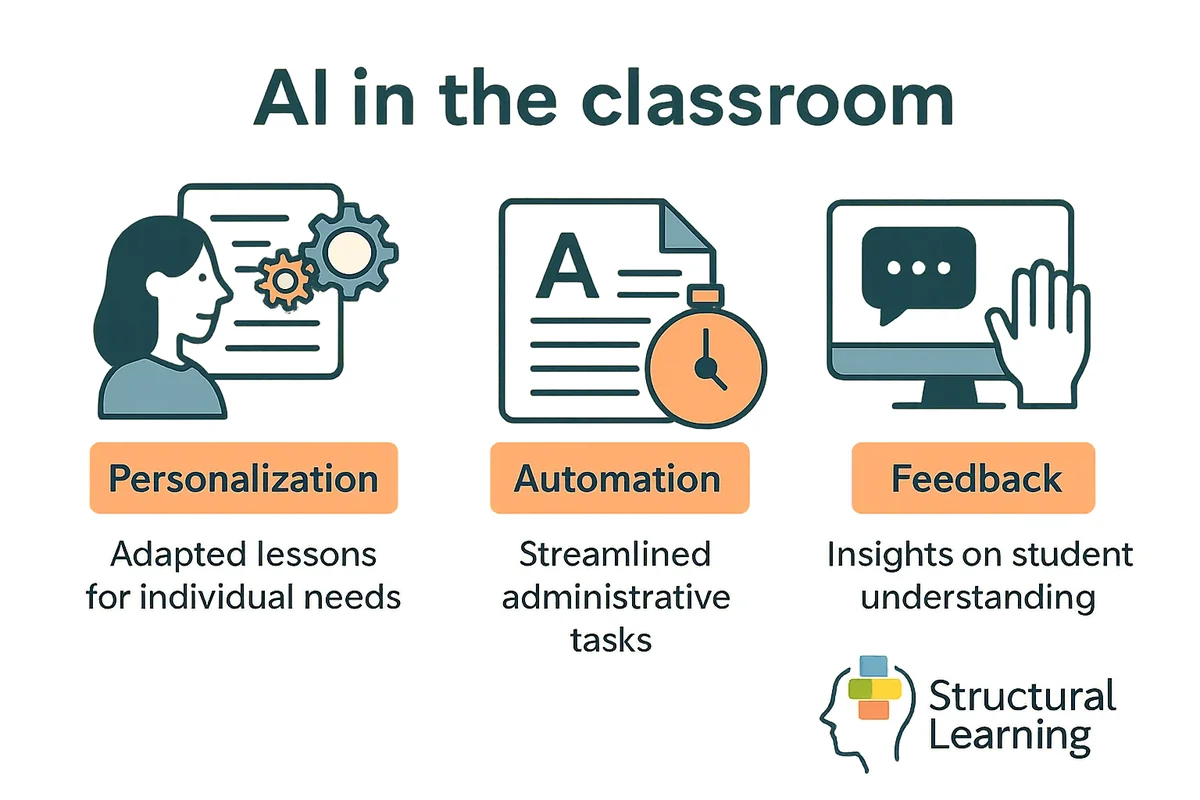

AI policies clarify AI use for teachers. They protect assessment integrity and reassure parents of responsible AI management. The AI landscape evolves quickly, yet good policies handle new tools without constant updates (Holmes, Bialik & Carey, 2023).

Jisc (2024) found that clear policies reduce staff anxiety and improve AI adoption. Teachers gain confidence experimenting with lesson planning when they understand boundaries. Learners benefit from consistent expectations and maintain better focus.

An AI policy also matters for a school's reputation. Checking coursework for AI use takes staff time and can worry parents. Leaders can use a policy to address these issues, protecting both standards and learner well-being.

Exam boards updated their AI policies in 2024. According to the Regulatory Board (2024), schools must align their practices so that all learners are treated fairly. A clear AI policy gives teachers confidence in teaching and assessment, and keeps internal standards consistent with external ones (Regulatory Board, 2024).

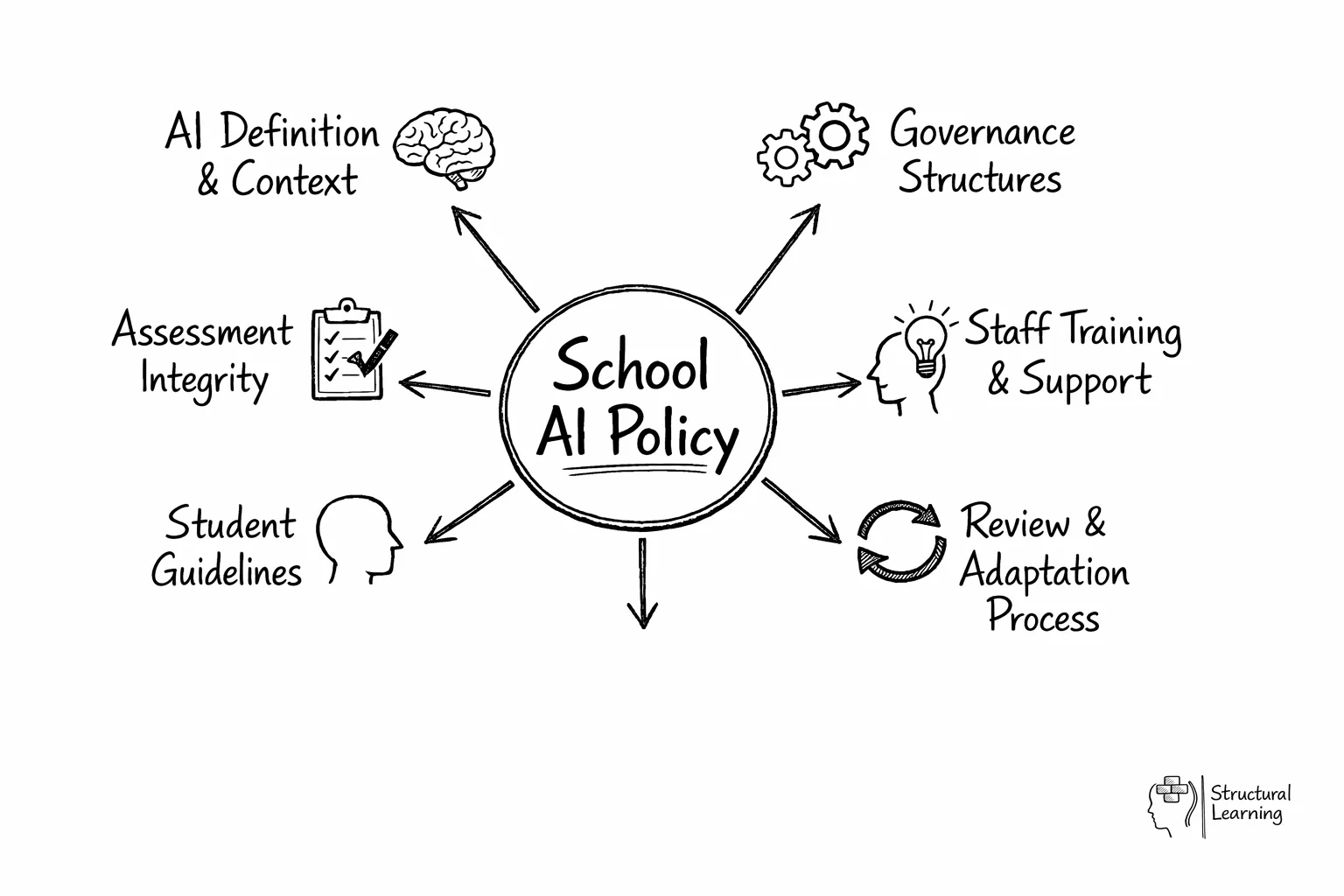

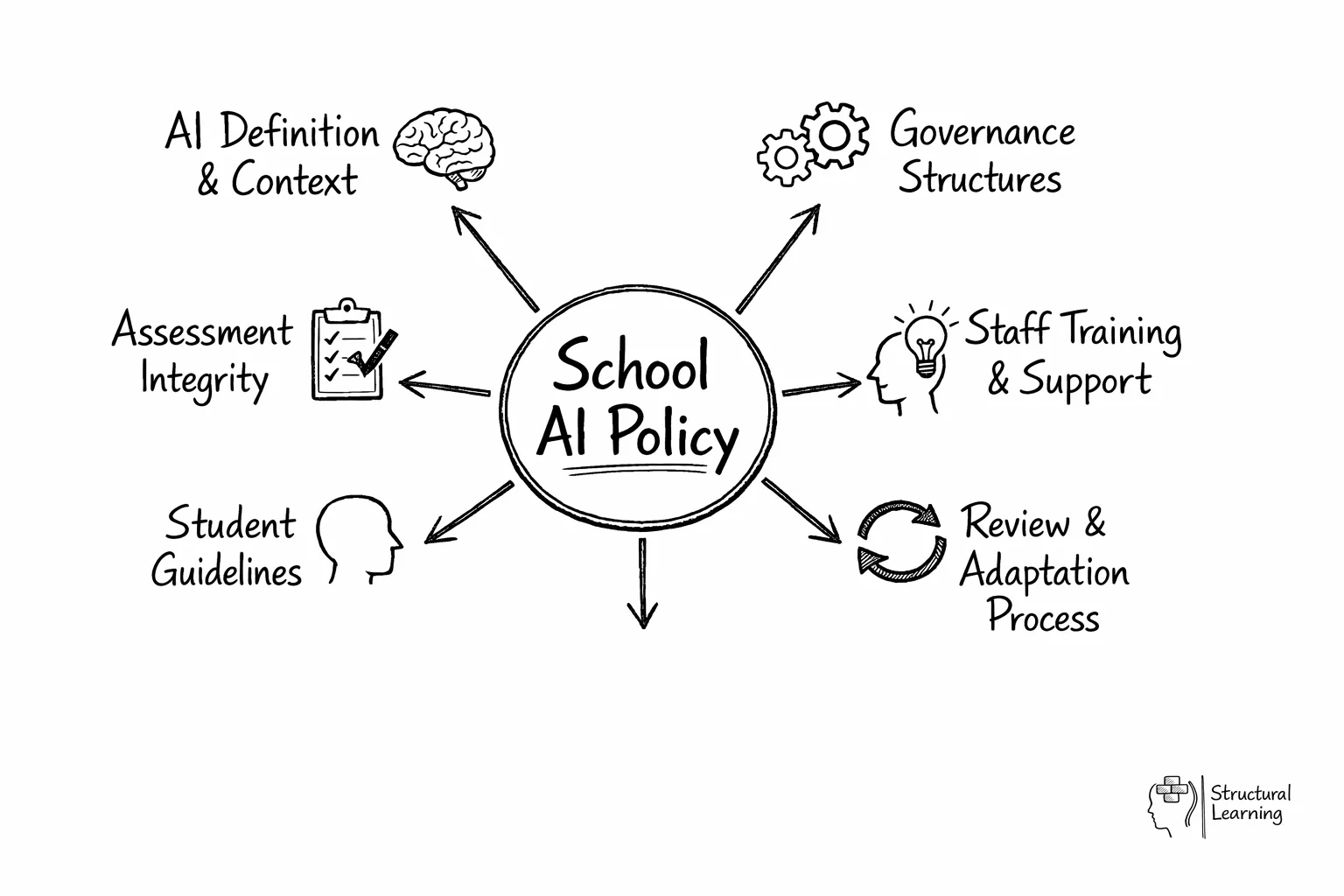

Start with clarity. Your policy should explain what counts as AI in practical terms your community understands. Avoid technical definitions that mean nothing to parents at Year 7 parents' evening.

Schools can sort AI tools into three groups. Some tools help learners with maths or reading (Holmes et al., 2023). Others help teachers with marking or resources (Wiggins, 1998). A third group could undermine valid assessment (Baird & Heap, 2021).

The template document includes space for you to list specific tools your school has evaluated. This list will grow, so build a review process rather than trying to catalogue everything at once. Focus on the tools your students and staff actually encounter, particularly those that support differentiation or enhance engagement in learning.

Who decides if a new AI tool gets adopted? Who handles concerns when a parent questions whether their child's homework used ChatGPT? Your policy needs named roles and clear processes.

AI policies need clear roles. A governor or leader gives strategic oversight. The digital learning lead manages operations. Heads of department adapt policy for subject assessment. This helps teachers build learners' thinking using tech (Holmes et al., 2023).

Modify decision-making flowcharts to fit your school's setup. Avoid creating separate systems for AI. If your safeguarding works, use its principles for AI governance.

AI in learner assessment is a common source of staff anxiety. Questioning checks that learners understand the work they submit (Bloom, 1956). Feedback shows learners when AI use crosses the line (Wiggins, 1998). Consider extra AI support for learners with SEND (Floridi & Taddeo, 2016). Focus on the critical thinking skills learners need to work with AI, not against it (Scardamalia & Bereiter, 2006).

When defining acceptable use boundaries, specificity is crucial. Rather than blanket prohibitions, effective policies distinguish between different types of AI assistance. For instance, using AI for initial brainstorming might be acceptable, whilst having AI write entire essays typically isn't. Consider creating a traffic light system: green for encouraged uses (research assistance, grammar checking), amber for conditional uses (translation support, accessibility aids), and red for prohibited uses (assignment completion, exam assistance).

Data privacy considerations extend beyond student information to include intellectual property and assessment security. Schools must address how AI tools store and potentially share student work, ensuring compliance with GDPR and educational data protection standards. Additionally, policies should specify which AI tools have been vetted for educational use and meet the school's security requirements, preventing staff and students from inadvertently compromising sensitive information through unauthorised platforms.

Governance structures and reviews are key to implementing AI policy. School leaders should assign AI oversight (Holmes et al., 2021). Staff training, learner education and parent updates are also vital (Kasneci et al., 2023). Form an AI group with staff, leaders and tech support. This group monitors issues and updates guidance (Zawacki-Richter et al., 2019), keeping your policy current.
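For schools that keep their acceptable use list in a spreadsheet or on an intranet page, the traffic light system described above could be recorded as a simple lookup. The sketch below is illustrative only: the names `Rating`, `POLICY` and `rate_use` are hypothetical, the listed uses are the examples given in the text, and treating an unlisted use as amber (ask a teacher first) is an assumption rather than a rule from any guidance.

```python
from enum import Enum


class Rating(Enum):
    GREEN = "encouraged"
    AMBER = "conditional"
    RED = "prohibited"


# Example uses taken from the traffic light categories in the text.
# A real policy would list the uses its own staff have agreed on.
POLICY = {
    "research assistance": Rating.GREEN,
    "grammar checking": Rating.GREEN,
    "translation support": Rating.AMBER,
    "accessibility aids": Rating.AMBER,
    "assignment completion": Rating.RED,
    "exam assistance": Rating.RED,
}


def rate_use(use: str) -> Rating:
    """Look up a use; unlisted uses default to AMBER (an assumption)."""
    return POLICY.get(use.strip().lower(), Rating.AMBER)


if __name__ == "__main__":
    for use in ("Grammar checking", "Exam assistance", "Image generation"):
        print(f"{use}: {rate_use(use).value}")
```

Defaulting to amber means a new tool is neither banned nor approved until someone has reviewed it, which fits the advice to build a review process rather than catalogue everything at once.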

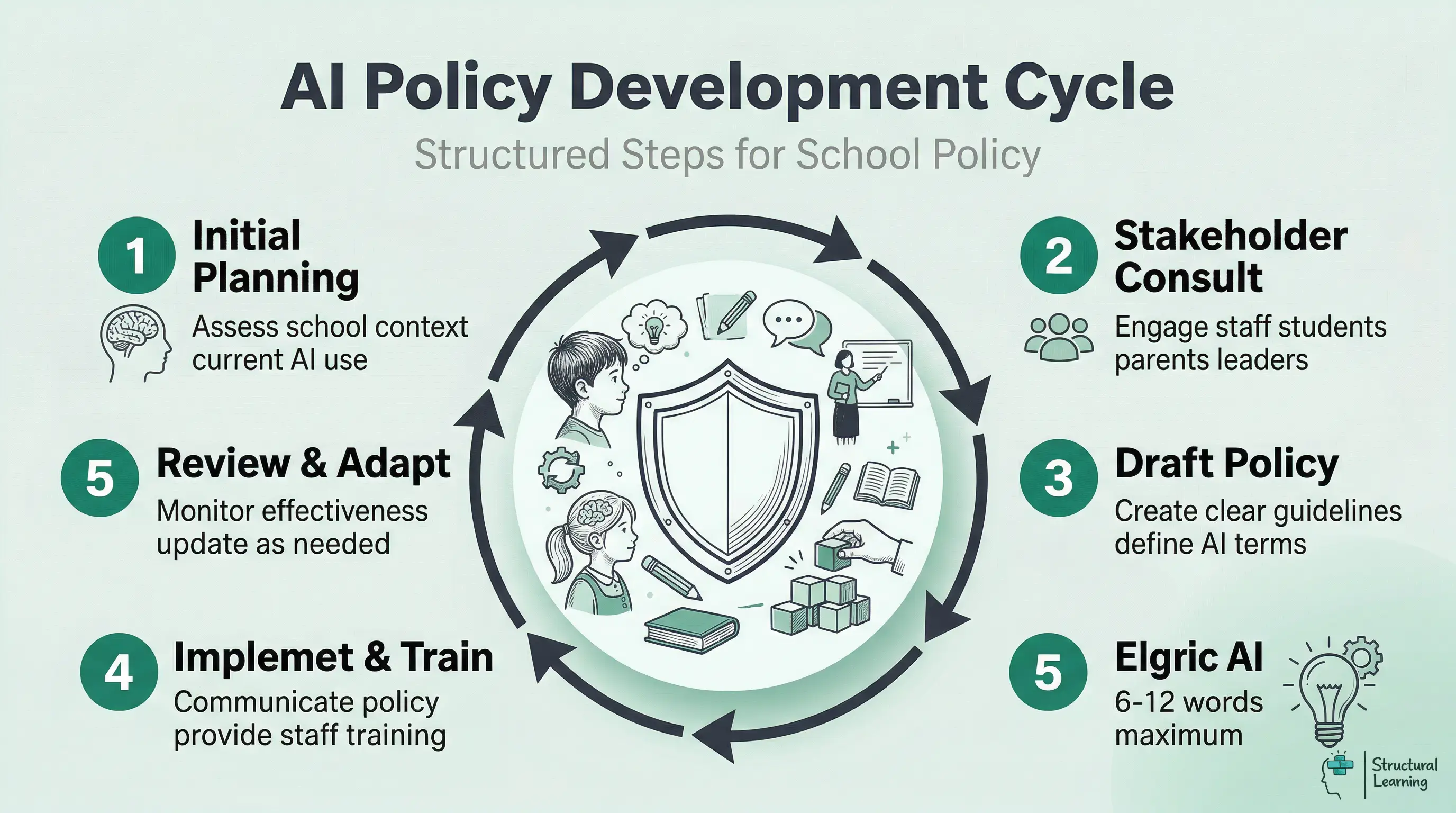

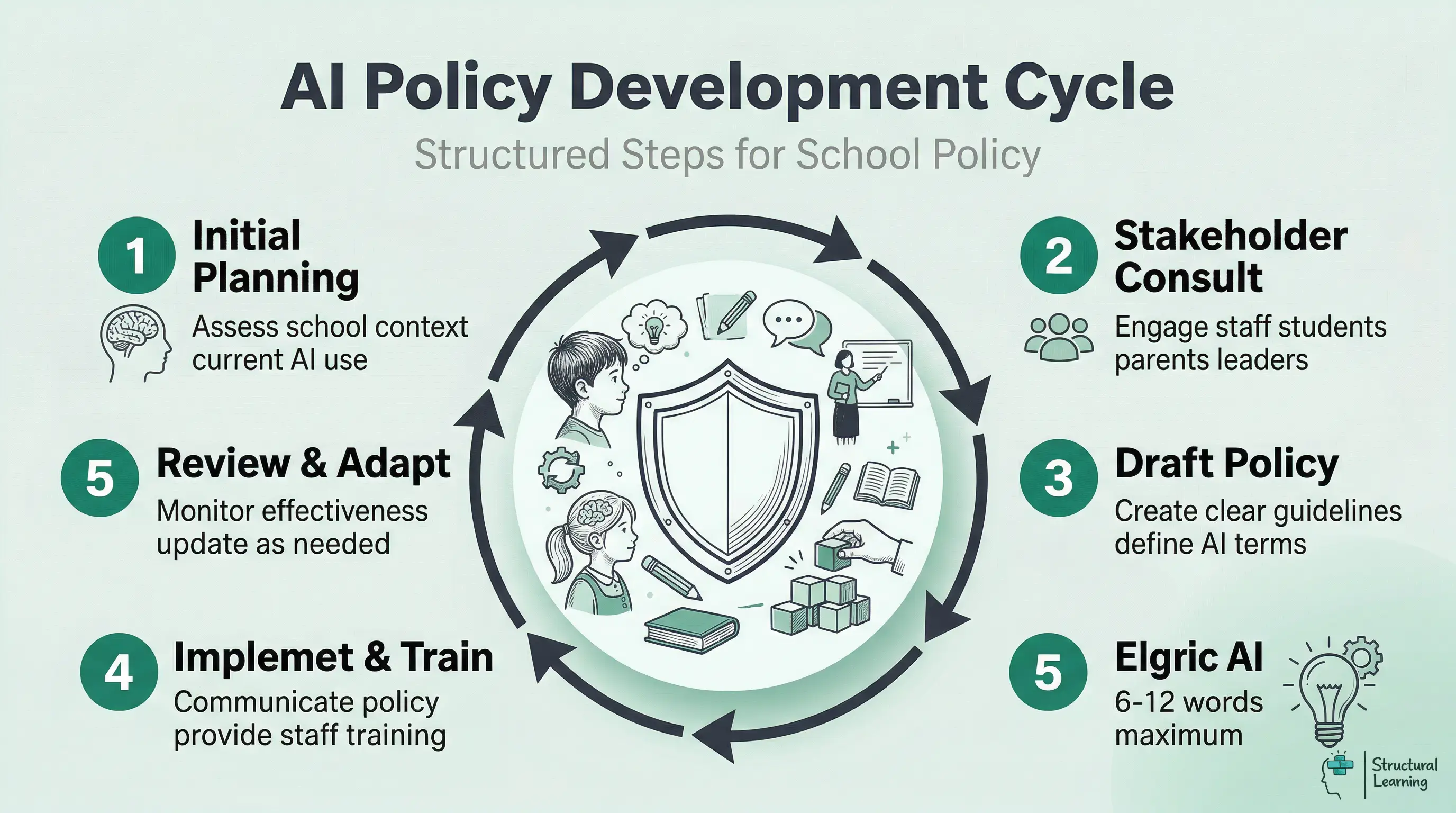

Implement the AI policy carefully, starting with senior leaders. A six-month plan that reaches all learners works well. Rogers' theory supports a pilot phase with keen teachers. Refine the policy using classroom feedback before wider rollout (Rogers, n.d.). This builds support and tackles real challenges.

Train department heads and key staff in months one and two. Training should cover AI tools and their impact on teaching, because teacher confidence affects how successfully technology is used (Ertmer & Ottenbreit-Leftwich, n.d.). This initial training builds a vital base.

Give learners AI guidelines in months three and four. Use workshops and examples based on subject areas. Get feedback to improve the policy before month six. This helps keep your AI policy practical and useful. The approach allows flexibility with new technology.

Good AI policy needs proper staff training. Teachers must understand the policy, not just follow rules. School leaders should give teachers time to learn about AI before they guide learners. Mishra and Koehler's research shows teachers need support to use new technology well.

Training must cover what AI can and cannot do. It should also address worries about academic integrity. For more on this topic, see AI academic integrity. Teachers need strategies for using AI in lessons. Hands-on work with AI platforms helps teachers see how learners might use them (Holmes et al., 2023; Zawacki-Richter et al., 2019). This helps teachers spot AI-generated work and discuss appropriate use (Lancaster & Cull, 2023).

Start with optional sessions for keen teachers before school-wide training. Mentoring, with tech-savvy staff supporting others, works well. Follow-up sessions keep policy consistent, helping staff as AI tools evolve (Holmes, 2023).

AI education needs clear rules about where help ends and cheating begins. Leaders must ensure learners use AI to improve their thinking, not replace it. Kirschner's research (n.d.) suggests learners gain more by tackling challenges themselves. They should use AI as support, not simply to complete tasks.

Learners require skills for using AI wisely, so curricula should cover its role in information and ethics. Give learners ways to assess AI content and understand algorithmic bias (Johnson, 2023). Human creativity matters in learning (Smith, 2024). Discuss AI limits in class to build responsible use (Brown, 2022; Davis, 2021).

AI literacy should be taught across all subjects, not just technology. Teachers can model good AI use by researching with AI and checking what it says. Discuss cases of AI bias to highlight potential problems. This helps learners develop skills for an AI world (Holmes et al., 2023) and maintain academic honesty (Wong, 2024).

AI monitoring needs tech and people working together, plus clear communication. Weber-Wulff et al. show AI detection tools are only 60-70% accurate. Use them for initial checks, not final decisions. Train staff to spot AI and create ways to report concerns.
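A short calculation shows why a detector flag should prompt a conversation rather than a sanction. The sketch below is hypothetical: it reads "65% accurate" as meaning the tool catches 65% of AI-written work and correctly clears 65% of learner-written work, and it assumes 10% of submissions are AI-written. None of these figures come from a specific tool or study; substitute your own estimates.

```python
def share_of_flags_that_are_correct(
    sensitivity: float, specificity: float, prevalence: float
) -> float:
    """Fraction of flagged submissions that really were AI-written.

    sensitivity: share of AI-written work the tool flags
    specificity: share of learner-written work the tool clears
    prevalence:  share of all submissions that are AI-written
    """
    true_flags = sensitivity * prevalence
    false_flags = (1 - specificity) * (1 - prevalence)
    return true_flags / (true_flags + false_flags)


if __name__ == "__main__":
    # Assumed figures for illustration only.
    result = share_of_flags_that_are_correct(
        sensitivity=0.65, specificity=0.65, prevalence=0.10
    )
    print(f"Flags that are correct: {result:.0%}")
```

Under these assumptions only about 17% of flagged pieces of work were actually AI-written, so most flags would point at learners who did their own work. That is the arithmetic behind using detection tools for initial checks only.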

Enforcement must be fair and help learners progress. First offences lead to a conversation and a chance to resubmit. Repeated issues need stricter action. Documentation tracks both violations and learning (Bradshaw, 2019). This makes the policy educational, not just punitive (Lewis, 2001; Sugai & Horner, 2006).

Policy reviews with staff and learners help adapt procedures. School leaders should review monthly or each term. They must assess AI detection, policy consistency, and learner understanding (Holmes et al., 2024). This feedback strengthens policy and education.

Good communication on AI policy is key. Schools should share AI policies clearly with parents. This helps them grasp AI's benefits and the safety measures in place. Epstein's research (n.d.) shows openness builds trust. Consistent values at home support the school.

Parents need clear explanations about AI tools in schools. Tell them how you protect learner data and promote responsible use. Show AI tools firsthand at information sessions, addressing worries about cheating. Give specific examples of acceptable and unacceptable AI use. Help parents guide their child's home learning, like O'Neill (2023) suggested.

Newsletters and meetings inform families about AI and policy changes. Offer parents visual guides for homework help and clear channels for questions. This teamwork gives learners consistent AI safety messages, boosting your school's digital programme.

AI policies must meet UK GDPR requirements for learner and staff data. Any AI tool that processes personal data needs a lawful basis for doing so; consent is one possible basis, not the only one. Schools should document their data processing and check that AI providers have adequate security (Information Commissioner's Office, 2018).

Schools must follow data protection, Education Act rules, and Ofsted criteria. Integrity policies should state how AI aligns with assessments. Learners' work must be authentic per exam boards. The DfE's guidance says clear AI policies show good governance (Department for Education).

AI audit trails are vital, especially when AI affects learners directly, as with behaviour tracking (Holmes, 2023). School leaders should make sure the school's data protection officer is involved in AI oversight. Regularly review compliance and record the purpose for which each AI tool uses data (Patel, 2024). This builds trust and supports legal compliance (Singh, 2022).

Schools need AI policies with acceptable use definitions. Policies should cover data protection, academic honesty, and staff training. A clear framework will help teachers and learners choose safe classroom tools (Holmes et al, 2024).

Form a group of leaders, teachers, tech staff, and parents. Audit current AI use and privacy risks. Leaders should then create guidelines, aligning them with behaviour and academic integrity policies (Holmes et al., 2023).

Learners and staff use AI tools daily, often without any advice on data privacy. A policy guides teachers to use these platforms safely and protects school data. It also reassures parents that the school manages technology well and maintains standards.

The DfE suggests schools use risk management for artificial intelligence. Guidance avoids rules, so leaders build local systems. Protect learner data and ensure age-appropriate content. Maintain test integrity, as advised by researchers like Holmes et al (2023).

Schools often focus on stopping cheating instead of teaching digital literacy. Overly technical policies can confuse learners and parents. Review policies regularly, because guidance goes out of date quickly as platforms change.

Teachers should state clearly when learners can use AI for assignments. They should show how to check AI outputs for errors and bias, modelling responsible use. If they see misuse, teachers should report safeguarding or data privacy issues through agreed school channels.

AI tools change how we check learner progress and protect honesty. Take-home essays are easily done by AI, warn researchers (Holmes et al., 2023). Schools should use varied assessments, researchers suggest (Wiggins, 1998). Acknowledge AI is now part of every learner's education.

Adaptations include more class assessments, oral exams, and group work for real-time discussion (Wiliam, 2011). Process assessment, like learning logs, shows genuine learner understanding (Black & Wiliam, 1998). Use assessments asking learners to critique AI content or apply learning to local examples (Darling-Hammond, 2010). This helps spot AI use versus real knowledge (Bloom, 1956).

Schools need AI use rules (Holmes et al., 2023). Create AI rubrics and train staff (Smith, 2024). Learners need contracts outlining AI boundaries (Jones, 2022). Tell learners and parents about changes to assessments (Brown, 2024).

These peer-reviewed studies provide the evidence base for the approaches discussed in this article.

Healthy Environments and Response to Trauma in Schools (HEARTS): A Whole-School, Multi-level, Prevention and Intervention Program for Creating Trauma-Informed, Safe and Supportive Schools

J. Dorado et al. (2016)

The HEARTS framework provides a model for creating trauma-informed schools, which is relevant when considering the potential impact of AI on student wellbeing and mental health. An AI policy should consider how to maintain a safe and supportive environment, especially for vulnerable students who may be affected by changes in teaching methods or data privacy concerns.

Engaging stakeholders and target groups in prioritising a public health intervention: the Creating Active School Environments (CASE) online Delphi study

Katie L Morton et al. (2017)

This paper highlights the importance of stakeholder engagement when developing public health interventions. When creating an AI policy for schools, it is crucial to involve teachers, students, parents, and other relevant parties to ensure the policy is effective, equitable, and addresses the needs of the school community.

Algorithmic bias in educational systems: Examining the impact of AI-driven decision making in modern education

O. Boateng & B. Boateng (2025)

This paper directly addresses the issue of algorithmic bias in education, which is a critical consideration for any AI policy. The policy must address how to mitigate bias in AI-driven decision making to ensure fairness and equity in areas such as admissions, assessment, and resource allocation.

Creating an Enabling Environment for a Comprehensive Sexuality Education Intervention in Indonesia: Findings From an Implementation Research Study

M. van Reeuwijk et al. (2023)

This study explores the factors that contribute to an enabling environment for comprehensive sexuality education. An AI policy should consider how to create a supportive context for sensitive topics, ensuring that AI tools are used responsibly and ethically, and do not compromise student privacy or wellbeing.

Creating Supportive Contexts for Early Adolescents during the First Year of Middle School: Impact of a Developmentally Responsive Multi-Component Intervention

Molly Dawes et al. (2019)

This paper focuses on creating supportive environments for students transitioning to middle school. An AI policy should consider how to support students during periods of change, ensuring that AI tools are used to enhance their learning experience and promote their social and emotional development.

By Paul Main, Founder & Educational Consultant, Structural Learning. Published 20 November 2025; updated 2 March 2026.