AI Chatbots and Self-Regulated Learning: Why Student Use Often Backfires

When students use ChatGPT, Claude, or Gemini for homework, the surface story is positive: they get unstuck quickly, the work gets done, the grades hold up. The deeper story is that the cognitive work which builds self-regulated learning (the cycle of planning, monitoring and reflection) is being silently outsourced to the chatbot. Without explicit teaching, learners learn where to get an answer rather than how to construct one.

This article explains why unrestricted AI chatbot use can weaken self-regulated learning. It also sets out what cognitive offloading research actually shows. Finally, it describes how to redesign tasks so AI acts as a feedback partner rather than an answer generator.

It is tempting to let learners use AI freely because the lesson appears to run more smoothly. A learner stuck on a quadratic equation can ask Claude, receive a worked solution, and move on. The teacher gains time. The task is finished. The learning, however, may not be.

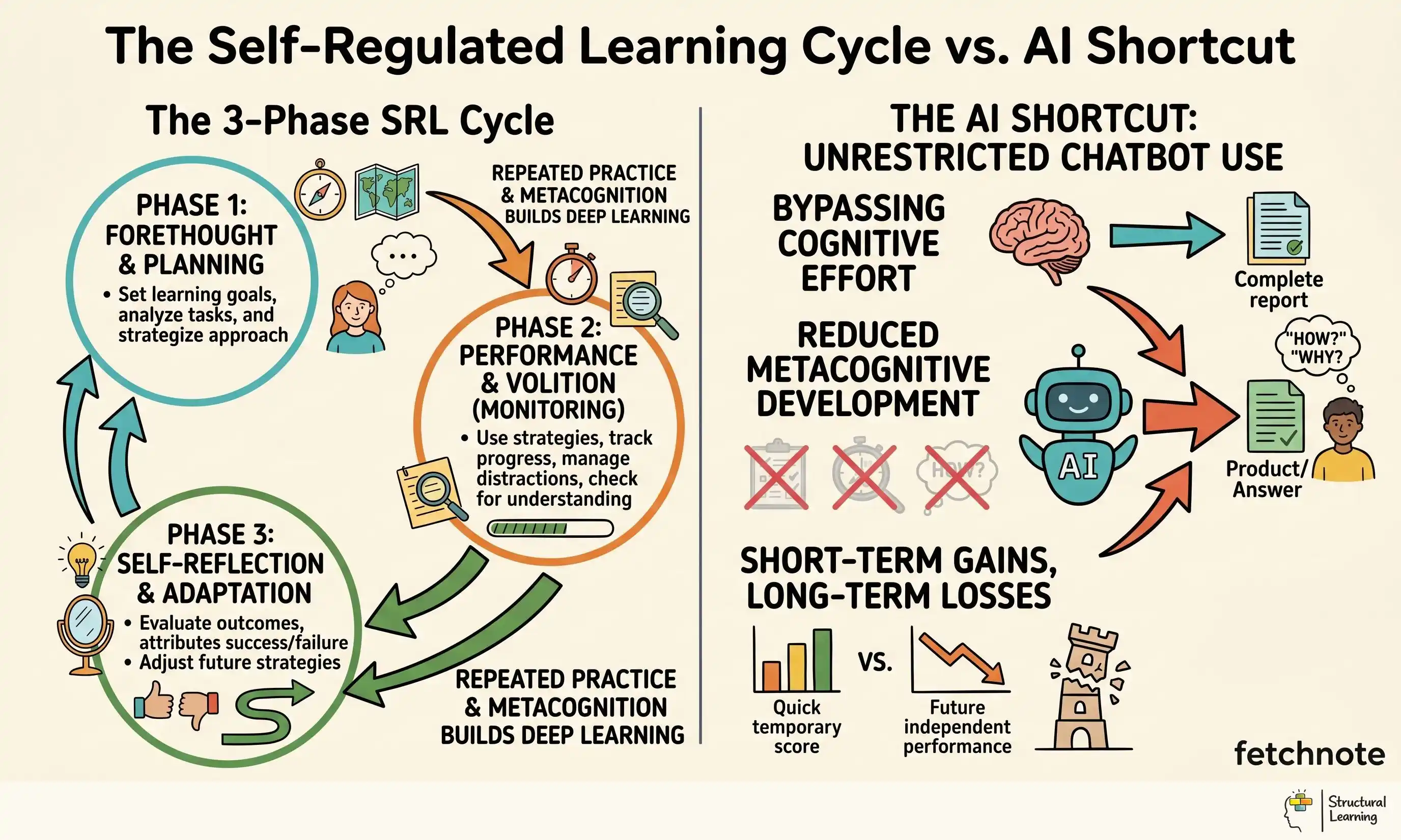

The problem is that task completion is being confused with learning. Zimmerman (2000) defined self-regulated learning as a cycle of forethought, performance and self-reflection, but that model was not written for a hyper-responsive conversational agent that can resolve confusion before the learner has monitored it. Molenaar (2022) shows why AI can either share regulation with the learner or take it over. When the chatbot performs the work, the learner has less reason to plan, monitor effort or judge the quality of their own answer.

This matters because self-regulated learning helps learners become independent over time. Hattie's original synthesis reported a high effect for metacognitive strategies (d = 0.69). Later Visible Learning updates also place self-regulation and metacognitive strategy use in the high-impact family of influences (Hattie, 2009; Hattie, 2023). The learners who cope best at A-level, university and work can plan, notice confusion, and respond to it. When AI acts as an answer generator, learners lose practice in exactly those habits.

Classroom example: Year 10 learner Hassan has a history essay on the causes of the First World War. Without AI, he reads three sources, struggles to connect them, and writes a flawed but recognisably his own argument. With unrestricted ChatGPT access, he produces a polished 800-word essay in twelve minutes. The mark is higher, but the cognitive practice of building an argument from sources, the skill the essay was meant to develop, has not happened.

Cognitive offloading is the use of external aids (calendars, search engines, AI) to reduce the cognitive demand on working memory. Risko and Gilbert (2016) reviewed 40 studies on the topic and found a consistent pattern. When the cost of offloading is low and the cost of thinking in your head is high, people offload. They also found that offloaded knowledge is recalled less reliably than knowledge processed internally.

Sparrow, Liu and Wegner (2011) ran a foundational study on what they called the "Google effect": participants who were told that information would be stored on a computer remembered the information itself less well, but remembered where it was stored more accurately. The brain opts for the easier task. AI chatbots are an extreme form of cognitive offloading. They don't just store information; they also do the thinking work (synthesising, summarising, arguing) that learners would otherwise do themselves.

Storm and Stone (2014) showed that the offloading effect is not limited to memory. Learners who used external aids to solve problems were less able to solve similar problems without help afterwards. Generative AI adds a further complication: the aid is dialogic, so it can question, praise and steer the learner as well as store information. That makes teacher design more important, not less (Yan et al., 2024).

There is a counter-argument from the "extended mind" literature (Clark and Chalmers, 1998) that external cognitive aids are a legitimate part of thinking, not a corruption of it. The counter-argument has merit when the goal is task completion. It has limits when the goal is the development of the thinking apparatus itself, which is the goal of education.

When learners use chatbots for academic work, teachers are starting to see common patterns. Classroom observations and new school reports often describe four recurring ones.

These patterns are not equally harmful. Prompting, checking and revising can be metacognitive when the learner sets a goal, tests the output and decides what to change. The risk is unstructured use: direct answers, polish-and-submit and uncritical confirmation hand the monitoring decision to the chatbot. Uncritical confirmation is especially misleading because it looks as if the learner is working, while the judgement "am I right?" has been delegated.

Classroom example: Year 12 learner Olivia uses ChatGPT for confirmation-seeking on chemistry calculations. Her homework marks look strong for two terms, but the mock exam, with no AI, exposes the gap. This is phantom attainment: formative assessment records show fluent work while the learner has not practised checking, estimating or correcting her own answer. A large high-school maths experiment found the same risk: unguarded GPT-4 access improved practice performance but reduced later unassisted performance (Bastani et al., 2025).

The answer is not a blanket ban. The safer route is to design tasks where AI is positioned as a feedback partner rather than a content generator. Three concrete redesigns are useful:

The first redesign is draft-then-challenge. Learners must produce a handwritten or typed first draft without AI before any AI use is permitted. They then submit the draft to the AI with the prompt: "Find three weaknesses in this argument and suggest counter-evidence." The learner responds to the AI's challenges in a second draft. The AI does not write the content; it challenges the content.

This preserves the planning, performance and reflection phases of self-regulated learning because the learner's own thinking generates the input that the AI then critiques.

In the second redesign, learners are given an AI-generated answer to a question they have not yet attempted. They must verify the answer against three primary sources, identifying any claims the AI made that the sources do not support. The cognitive work is in the verification, not the generation.

This pattern teaches AI literacy directly. AI output can sound confident, but it may also be biased or unsupported. The learner must build the habit of checking evidence, rather than accepting fluent writing as truth (Kasneci et al., 2023; UNESCO, 2023).

In the third redesign, learners use the AI exclusively for metacognitive reflection prompts: "Ask me three questions that would help me understand this concept more deeply." "What is the most likely misconception a learner would have about this topic?" "What question should I be asking myself before I write this paragraph?"

This pattern only works when the prompt is a metacognitive question, not a bid to make the machine comply. Teaching prompt engineering as a generic future skill can train learners to tune prompts until the chatbot produces a smoother answer, which is different from planning, monitoring and judging their own work. Lodge, de Barba and Broadbent argue that generative AI sits inside a network of regulation, so evaluative judgement must stay with the learner rather than the tool (Lodge et al., 2023).

Classroom example: Year 9 English teacher Mr Chen replaces the standard "write an essay on Macbeth's ambition" task with a draft-then-challenge version. Learners write 400 words by hand in class, then take their draft home and prompt the AI: "What are three weaknesses in this argument?" In the next lesson, they respond to the AI's critiques in a second draft. Essay quality improves; more importantly, learners practise evaluating their own argument and responding to critique.

For neurodivergent learners with executive function difficulties, including some learners with ADHD, autism or working memory difficulties, AI support can be more justified in some cases. Risko and Gilbert (2016) noted that cognitive offloading, or using an outside aid, is more useful when the mental load is too high for the learner. A learner with severe working memory limits may need external scaffolds before they can access the curriculum at all.

The redesign for SEND learners is to use AI as a clear external support system. It can act as a checklist generator, a reading plan creator, or a "what-step-comes-next" prompt. The AI supports cognitive operations the learner cannot yet manage internally, while the curriculum content stays intact. This fits the principle that scaffolding makes learning processes accessible rather than simplifying content (Wood, Bruner and Ross, 1976).

SEND learners face the same risk as other learners: AI can complete the thinking that school is trying to develop. The difference is the threshold. For some learners, external planning and sequencing are access supports before they are independence risks.

"My learners will fall behind if I restrict AI use." They may fall behind in task completion, not in learning. The marks they earn through unsupported AI use do not necessarily reflect skill they will retain.

"Detection tools solve the problem." Detection tools are unreliable and treat AI use only as cheating rather than as a misuse of a tool. The framing is wrong: learners need to learn when AI helps and when it harms, not just whether they will be caught.

"AI literacy is a separate subject." AI literacy belongs inside every subject, but it should not collapse into prompt engineering. A learner who can make a chatbot produce a compliant paragraph has not necessarily learnt to plan, monitor or evaluate. The stronger classroom question is: "what cognitive work did I keep, and what did I hand over?"

"Younger children should be insulated from AI entirely." The evidence base does not support this as a complete policy. Young learners face the same risk as older learners: using AI to avoid thinking prevents skill development. They also need clear teaching about how to evaluate AI output, which they will encounter outside school anyway.

Three limitations of the current evidence base are worth flagging.

First, almost all of the cognitive offloading research predates the current generation of large language models. Sparrow et al. (2011) and Risko and Gilbert (2016) studied calendars, search engines, and notebooks, not generative AI. The mechanism is plausibly the same, but the magnitude of the effect for AI specifically is not yet well characterised.

Second, classroom research on AI use is in its infancy. Walter (2024) and Bearman et al. (2024) provide largely observational data, rarely with controlled comparisons. The conclusions in this article should be read as best current understanding, subject to revision as more rigorous evidence emerges.

Third, equity cuts both ways. Learners with private tutors have always had one-to-one cognitive support, and AI can widen access to that kind of help. But the metacognitive digital divide is real: affluent learners may use paid models as Socratic tutors, while disadvantaged learners may rely on free tools as blunt answer generators. The answer is to teach AI use well, with shared school routines, not to leave access and skill to family income (OECD, 2024; Selwyn, 2024).

In your next lesson, run one of the three task redesigns above on a single piece of work. The draft-then-challenge pattern is the easiest place to start. Tell learners explicitly that the goal is not to get the right answer but to practise the cognitive skill of challenging their own argument. Compare what you observe in the classroom and in the resulting work to a similar task without the redesign. The difference will tell you whether AI in your classroom is building learners or building dependency.

Bastani et al. (2025).

Clark and Chalmers (1998).

Lodge et al. (2023).

Yan et al. (2024).